12

Kym teaches sixth grade in an urban school where most of the families in the community live below the poverty line. Each year the majority of the students in her school fail the state-wide tests. Kym follows school district teaching guides and typically uses direct instruction in her Language Arts and Social Studies classes. The classroom assessments are designed to mirror those on the state-wide tests so the students become familiar with the assessment format. While taking a graduate summer course on motivation, Kym reads an article called “Teaching strategies that honor and motivate inner-city African American students” (Teel, Debrin-Parecki, & Covington, 1998), and she decides to change her instruction and assessment in the fall in four ways. First, she stresses an incremental view of ability that focuses on effort, and she allows students to revise their work several times until the criteria are met. Second, she gives students choices in performance assessments (e.g. oral presentation, art project, creative writing). Third, she encourages responsibility by asking students to assist in classroom tasks such as setting up video equipment and handing out papers. Fourth, she validates students’ cultural heritage by encouraging them to read biographies and historical fiction from their own cultural backgrounds. Kym reports that the changes in her students’ effort and demeanor in class are dramatic: students are more enthusiastic, work harder, and produce better products. At the end of the year twice as many of her students pass the state-wide test as in the previous year.

Afterward: Kym still teaches sixth grade in the same school district and continues to modify the strategies described above. Even though the performance of the students she taught improved, the school was closed because, on average, its students’ performance was poor. Kym has also earned a Ph.D. and teaches Educational Psychology to preservice and inservice teachers in evening classes.

Kym’s story illustrates several themes related to assessment that we explore in this chapter on teacher-made assessment strategies and in Chapter 13 on standardized testing. First, choosing effective classroom assessments is related to instructional practices, beliefs about motivation, and the presence of state-wide standardized testing. Second, some teacher-made classroom assessments enhance student learning and motivation, and some do not. Third, teachers can improve their teaching through action research. This involves identifying a problem (e.g. low motivation and achievement), learning about alternative approaches (e.g. reading the literature), implementing the new approaches, observing the results (e.g. students’ effort and test results), and continuing to modify the strategies based on their observations.

Best practices in assessing student learning have undergone dramatic changes in the last 20 years. When Rosemary was a mathematics teacher in the 1970s, she did not assess students’ learning; she tested them on the mathematics knowledge and skills she had taught during the previous weeks. The tests varied little in format, and students always took them individually with pencil and paper. Many teachers, including mathematics teachers, now use a wide variety of methods to determine what their students have learned and also use this assessment information to modify their instruction. In this chapter the focus is on using classroom assessments to improve student learning, and we begin with some basic concepts.

Basic concepts

Assessment is an integrated process of gaining information about students’ learning and making value judgments about their progress (Linn & Miller, 2005). Information about students’ progress can be obtained from a variety of sources including projects, portfolios, performances, observations, and tests. The information about students’ learning is often assigned specific numbers or grades, and this involves measurement. Measurement answers the question “How much?” and is used most commonly when the teacher scores a test or product and assigns numbers (e.g. 28/30 on the biology test; 90/100 on the science project). Evaluation is the process of making judgments about the assessment information (Airasian, 2005). These judgments may be about individual students (e.g. should Jacob’s course grade take into account his significant improvement over the grading period?), the assessment method used (e.g. is the multiple choice test a useful way to obtain information about problem solving?), or one’s own teaching (e.g. most of the students this year did much better on the essay assignment than last year, so my new teaching methods seem effective).

The primary focus in this chapter is on assessment for learning, where the priority is designing and using assessment strategies to enhance student learning and development. Assessment for learning is often formative assessment, i.e. it takes place during the course of instruction by providing information that teachers can use to revise their teaching and students can use to improve their learning (Black, Harrison, Lee, Marshall & Wiliam, 2004). Formative assessment includes both informal assessment, involving spontaneous, unsystematic observations of students’ behaviors (e.g. during a question and answer session or while the students are working on an assignment), and formal assessment, involving pre-planned, systematic gathering of data. Assessment of learning is formal assessment that involves assessing students in order to certify their competence and fulfill accountability mandates; it is the primary focus of the next chapter on standardized tests but is also considered in this chapter. Assessment of learning is typically summative, that is, administered after the instruction is completed (e.g. a final examination in an educational psychology course). Summative assessments provide information about how well students mastered the material, whether students are ready for the next unit, and what grades should be given (Airasian, 2005).

Assessment for learning: an overview of the process

Using assessment to advance students’ learning, not just to check on it, requires viewing assessment as a process that is integral to all phases of teaching, including planning, classroom interactions and instruction, communication with parents, and self-reflection (Stiggins, 2002). Essential steps in assessment for learning include:

Step 1: Having clear instructional goals and communicating them to students

In the previous chapter we documented the importance of teachers thinking carefully about the purposes of each lesson and unit. This may be hard for beginning teachers. For example, Vanessa, a middle school social studies teacher, might say that the goal of her next unit is: “Students will learn about the Civil War.” Clearer goals require that Vanessa decide what it is about the US Civil War she wants her students to learn, e.g. the dates and names of battles, the causes of the US Civil War, the differing perspectives of those living in the North and the South, or the day-to-day experiences of soldiers fighting in the war. Vanessa cannot devise appropriate assessments of her students’ learning about the US Civil War until she is clear about her own purposes.

For effective teaching Vanessa also needs to communicate the goals and objectives clearly to her students so they know what is important for them to learn. No matter how thorough a teacher’s planning has been, if students do not know what they are supposed to learn, they will not learn as much. Because communication is so important to teachers, a separate chapter is devoted to this topic (Chapter 9), so communication is not considered in any detail in this chapter.

Step 2: Selecting appropriate assessment techniques

Selecting and administering assessment techniques that are appropriate for the goals of instruction as well as the developmental level of the students are crucial components of effective assessment for learning. Teachers need to know the characteristics of a wide variety of classroom assessment techniques and how these techniques can be adapted for various content, skills, and student characteristics. They also should understand the role reliability, validity, and the absence of bias should play in choosing and using assessment techniques. Much of this chapter focuses on this information.

Step 3: Using assessment to enhance motivation and confidence

Students’ motivation and confidence are influenced by the type of assessment used as well as the feedback given about the assessment results. Consider Samantha, a college student who takes a history class in which the professor’s lectures and textbook focus on interesting major themes. However, the assessments are all multiple choice tests that ask about facts, and Samantha, who initially enjoys the classes and readings, becomes angry, loses confidence that she can do well, and begins to spend less time on the class material. In contrast, some instructors have observed that many students in educational psychology classes like the one you are now taking will work harder on assessments that are case studies than on more traditional exams or essays. The type of feedback provided to students is also important, and we elaborate on these ideas later in this chapter.

Step 4: Adjusting instruction based on information

An essential component of assessment for learning is that the teacher uses the information gained from assessment to adjust instruction. Some adjustments occur in the middle of a lesson, when a teacher may decide that students’ responses to questions indicate sufficient understanding to introduce a new topic, or that her observations of students’ behavior indicate that they do not understand the assignment and so need further explanation. Adjustments also occur when the teacher reflects on the instruction after the lesson is over and is planning for the next day. We provide examples of adjusting instruction in this chapter and consider teacher reflection in more detail in Chapter 2.

Step 5: Communicating with parents and guardians

Students’ learning and development are enhanced when teachers communicate with parents regularly about their children’s performance. Teachers communicate with parents in a variety of ways, including newsletters, telephone conversations, email, school district websites, and parent-teacher conferences. Effective communication requires that teachers can clearly explain the purpose and characteristics of the assessment as well as the meaning of students’ performance. This requires a thorough knowledge of the types and purposes of teacher-made and standardized assessments (this chapter and Chapter 13) as well as clear communication skills (Chapter 9).

We now consider each step in the process of assessment for learning in more detail. In order to be able to select and administer appropriate assessment techniques teachers need to know about the variety of techniques that can be used as well as what factors ensure that the assessment techniques are high quality. We begin by considering high quality assessments.

Selecting appropriate assessment techniques I: high quality assessments

For an assessment to be high quality, it needs to have good validity and reliability and to be free from bias.

Validity

Validity is the evaluation of the “adequacy and appropriateness of the interpretations and uses of assessment results” for a given group of individuals (Linn & Miller, 2005, p. 68). For example, is it appropriate to conclude that the results of a mathematics test on fractions given to recent immigrants accurately represent their understanding of fractions? Is it appropriate for the teacher to conclude, based on her observations, that a kindergarten student, Jasmine, has Attention Deficit Disorder because she does not follow the teacher’s oral instructions? Obviously, in each situation other interpretations are possible: the immigrant students may have poor English skills rather than poor mathematics skills, or Jasmine may be hearing impaired.

It is important to understand that validity refers to the interpretations and uses made of the results of an assessment procedure, not to the assessment procedure itself. For example, judgments based on the results of the same test on fractions may be valid if the students all understand English well. A teacher’s conclusion from her observations that the kindergarten student has Attention Deficit Disorder (ADD) may be appropriate if the student has been screened for hearing and other disorders (although the classification of a disorder like ADD cannot be made by one teacher). Validity involves making an overall judgment of the degree to which the interpretations and uses of the assessment results are justified. Validity is a matter of degree (e.g. high, moderate, or low validity) rather than all-or-none (e.g. totally valid vs. invalid) (Linn & Miller, 2005).

Three sources of evidence are considered when assessing validity: content, construct, and criterion-related (e.g. predictive) evidence. Content validity evidence is associated with the question: How well does the assessment include the content or tasks it is supposed to? For example, suppose your educational psychology instructor devises a mid-term test and tells you it covers chapters one to seven in the textbook. Obviously, all the items in the test should be based on the content from educational psychology, not your methods or cultural foundations classes. Also, the items in the test should cover content from all seven chapters and not just chapters three to seven, unless the instructor tells you that these chapters have priority.

Teachers have to be clear about their purposes and priorities for instruction before they can begin to gather evidence related to content validity. Content validation determines the degree to which assessment tasks are relevant to and representative of the tasks judged by the teacher (or test developer) to represent their goals and objectives (Linn & Miller, 2005). It is important for teachers to think about content validation when devising assessment tasks, and one way to help do this is to devise a Table of Specifications. An example, based on Pennsylvania’s State standards for grade 3 geography, is in Table 35. The left-hand column lists the instructional content for a 20-item test the teacher has decided to construct, with two kinds of instructional objectives: identifies, and uses or locates. The second and third columns give the number of items for each content area and each instructional objective. Notice that the teacher has decided that six items should be devoted to the sub-area of geographic representations, more than any other sub-area. Devising a table of specifications helps teachers determine if some content areas or concepts are over-sampled (i.e. there are too many items) and some concepts are under-sampled (i.e. there are too few items).

Table 35: Example of Table of Specifications: grade 3 basic geography literacy

| Content | Objective: identifies | Objective: uses or locates | Total number of items | Per cent of items |
|---|---|---|---|---|
| Identify geography tools and their uses | | | | |
| Geographic representations (e.g. maps, globe, diagrams, and photographs) | 3 | 3 | 6 | 30% |
| Spatial information (sketch and thematic maps) | 1 | 1 | 2 | 10% |
| Mental maps | 1 | 1 | 2 | 10% |
| Identify and locate places and regions | | | | |
| Physical features (e.g. lakes, continents) | 1 | 2 | 3 | 15% |
| Human features (countries, states, cities) | 3 | 2 | 5 | 25% |
| Regions with unifying geographic characteristics (e.g. river basins) | 1 | 1 | 2 | 10% |
| Number of items | 10 | 10 | 20 | |
| Percentage of items | 50% | 50% | | 100% |
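The totals and percentages in Table 35 are simple arithmetic, and recomputing them is a quick way to confirm that the plan adds up before writing items. The following is a minimal Python sketch, assuming the teacher records the planned item counts per sub-area as (identifies, uses or locates) pairs; the names `PLAN` and `summarize` are hypothetical, chosen only for illustration.

```python
# Planned item counts from Table 35: (identifies, uses or locates) per sub-area.
# PLAN and summarize are illustrative names, not part of any standard tool.
PLAN = {
    "Geographic representations": (3, 3),
    "Spatial information": (1, 1),
    "Mental maps": (1, 1),
    "Physical features": (1, 2),
    "Human features": (3, 2),
    "Regions with unifying characteristics": (1, 1),
}


def summarize(plan):
    """Return per-row (items, per cent), column totals, and the grand total."""
    grand_total = sum(sum(counts) for counts in plan.values())
    rows = {}
    for area, counts in plan.items():
        total = sum(counts)
        rows[area] = (total, round(100 * total / grand_total))
    # zip(*...) regroups the pairs into one column per instructional objective.
    column_totals = [sum(column) for column in zip(*plan.values())]
    return rows, column_totals, grand_total


if __name__ == "__main__":
    rows, columns, total = summarize(PLAN)
    for area, (n, pct) in rows.items():
        print(f"{area}: {n} items ({pct}%)")
    print("Column totals:", columns, "Total:", total)
```

Comparing the printed per cent for each sub-area with the weight the teacher intended is one way to spot over- or under-sampled content.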

Construct validity evidence is more complex than content validity evidence. Often we are interested in making broader judgments about students’ performance than whether they have mastered specific skills such as doing fractions. The focus may be on constructs such as mathematical reasoning or reading comprehension. A construct is a characteristic of a person we assume exists to help explain behavior. For example, we use the concept of test anxiety to explain why some individuals, when taking a test, have difficulty concentrating, have physiological reactions such as sweating, and perform poorly on tests but not on class assignments. Similarly, mathematical reasoning and reading comprehension are constructs because we use them to help explain performance on an assessment. Construct validation is the process of determining the extent to which performance on an assessment can be interpreted in terms of the intended constructs and is not influenced by factors irrelevant to the construct. For example, judgments about recent immigrants’ performance on a mathematical reasoning test administered in English will have low construct validity if the results are influenced by English language skills that are irrelevant to mathematical problem solving. Similarly, construct validity of end-of-semester examinations is likely to be poor for those students who are highly anxious when taking major tests but not during regular class periods or when doing assignments. Teachers can help increase construct validity by trying to reduce factors that influence performance but are irrelevant to the construct being assessed. These factors include anxiety, English language skills, and reading speed (Linn & Miller, 2005).

A third form of validity evidence is called criterion-related validity. Selective colleges in the USA use the ACT or SAT, among other criteria, to choose who will be admitted because these standardized tests help predict freshman grades, i.e. they have high criterion-related validity. Some K-12 schools give students math or reading tests in the fall semester in order to predict which students are likely to do well on the annual state tests administered in the spring semester and which students are unlikely to pass the tests and will need additional assistance. If the tests administered in the fall do not predict students’ performance accurately, then the additional assistance may be given to the wrong students, illustrating the importance of criterion-related validity.
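Criterion-related evidence is commonly summarized as a correlation (a validity coefficient) between scores on the test and scores on the criterion. The Python sketch below computes a Pearson correlation between fall and spring scores and, more directly tied to the concern above, the share of students whose fall "needs help" flag matches their spring outcome. The function names, the cut scores, and the six students’ scores are all hypothetical and for illustration only.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between paired scores, e.g. a fall test and a spring test."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length score lists with at least two students")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary to compute a correlation")
    return cov / math.sqrt(var_x * var_y)


def prediction_agreement(fall, spring, fall_cutoff, pass_mark):
    """Share of students for whom the fall flag matches the spring outcome.

    A student is flagged for extra help when the fall score is below fall_cutoff;
    the flag is right when that student also scores below pass_mark in the spring
    (or, if not flagged, scores at or above it). Both cut scores are hypothetical.
    """
    matches = sum((f < fall_cutoff) == (s < pass_mark) for f, s in zip(fall, spring))
    return matches / len(fall)


# Hypothetical scores for six students, for illustration only.
fall = [12, 18, 25, 30, 34, 40]
spring = [48, 55, 62, 58, 75, 80]
print(round(pearson_r(fall, spring), 2))
print(prediction_agreement(fall, spring, fall_cutoff=22, pass_mark=60))
```

In this made-up data the fourth student is not flagged in the fall but scores below the pass mark in the spring, which is exactly the "assistance given to the wrong students" case the paragraph warns about.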

Reliability

Reliability refers to the consistency of the measurement (Linn & Miller, 2005). Suppose Mr Garcia is teaching a unit on food chemistry in his tenth grade class and gives an assessment at the end of the unit using test items from the teachers’ guide. Reliability is related to questions such as: How similar would the students’ scores be if they had taken the assessment on a Friday or a Monday? Would the scores have varied if Mr Garcia had selected different test items, or if a different teacher had graded the test? An assessment provides information about students by using a specific measure of performance at one particular time. Unless the results from the assessment are reasonably consistent over different occasions, different raters, or different tasks (in the same content domain), confidence in the results will be low, and the results cannot be useful in improving student learning.

Obviously we cannot expect perfect consistency. Students’ memory, attention, fatigue, effort, and anxiety fluctuate and so influence performance. Even trained raters vary somewhat when grading assessments such as essays, a science project, or an oral presentation. Also, the wording and design of specific items influence students’ performance. However, some assessments are more reliable than others, and there are several strategies teachers can use to increase reliability.

First, assessments with more tasks or items typically have higher reliability. To understand this, consider two tests, one with five items and one with 50 items. Chance factors influence the shorter test more than the longer test. If a student does not understand one of the items in the first test, the total score is very highly influenced (it would be reduced by 20 per cent). In contrast, if one item in the 50-item test were confusing, the total score would be influenced much less (by only 2 per cent). Obviously this does not mean that assessments should be inordinately long, but, on average, enough tasks should be included to reduce the influence of chance variations. Second, clear directions and tasks help increase reliability. If the directions or wording of specific tasks or items are unclear, then students have to guess what they mean, which undermines the accuracy of their results. Third, clear scoring criteria are crucial in ensuring high reliability (Linn & Miller, 2005). Later in this chapter we describe strategies for developing scoring criteria for a variety of types of assessment.
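The 20 per cent versus 2 per cent comparison can be checked directly, and a small simulation shows the broader point that chance has less effect on longer tests. This Python sketch assumes, purely for illustration, a student who answers every item correctly with the same probability (0.7 here) independently of the other items; real items differ in difficulty, so treat the numbers as a rough picture rather than a reliability estimate. The function names are hypothetical.

```python
import random
import statistics


def one_item_weight(num_items):
    """Per cent of the total score that a single, equally weighted item carries."""
    return 100 / num_items


def score_spread(num_items, p_correct=0.7, trials=2000, seed=1):
    """Standard deviation (in percentage points) of simulated test scores.

    Assumes, for illustration only, that the student answers each item correctly
    with the same probability p_correct, independently of the other items.
    """
    rng = random.Random(seed)  # fixed seed so the sketch gives repeatable output
    scores = []
    for _ in range(trials):
        correct = sum(rng.random() < p_correct for _ in range(num_items))
        scores.append(100 * correct / num_items)
    return statistics.pstdev(scores)


for n in (5, 50):
    print(f"{n} items: one item = {one_item_weight(n):.0f}% of the score; "
          f"simulated spread = {score_spread(n):.1f} points")
```

The same student's simulated score swings far more from one sitting to the next on the five-item test than on the 50-item test, which is the sense in which the longer test is more reliable.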

Absence of bias

Bias occurs in assessment when there are components in the assessment method or administration of the assessment that distort students’ performance because of their personal characteristics such as gender, ethnicity, or social class (Popham, 2005). Two types of assessment bias are important: offensiveness and unfair penalization. An assessment is most likely to be offensive to a subgroup of students when negative stereotypes are included in the test. For example, the assessment in a health class could include items in which all the doctors are men and all the nurses are women. Or, a series of questions in a social studies class could portray Latinos and Asians as immigrants rather than native-born Americans. In these examples, some female, Latino, or Asian students are likely to be offended by the stereotypes, and this can distract them from performing well on the assessment.

Unfair penalization occurs when items disadvantage one group, not because they may be offensive but because of differential background experiences. For example, an item on a math assessment that assumes knowledge of a particular sport may disadvantage groups not as familiar with that sport (e.g. American football for recent immigrants). Or an assessment on teamwork that asks students to model their concept of a team on a symphony orchestra is likely to be easier for students who have attended orchestra performances, probably students from affluent families. Unfair penalization does not occur just because some students do poorly in class. For example, asking questions about a specific sport in a physical education class when information on that sport has been discussed in class is not unfair penalization, as long as the questions do not require knowledge beyond that taught in class that some groups are less likely to have.

It can be difficult for new teachers in multi-ethnic classrooms to devise interesting assessments that do not penalize any groups of students. Teachers need to think seriously about the impact of students’ differing backgrounds on the assessments they use in class. Listening carefully to what students say is important, as is learning about the students’ backgrounds.

Selecting appropriate assessment techniques II: types of teacher-made assessments

One of the challenges for beginning teachers is to select and use appropriate assessment techniques. In this section we summarize the wide variety of types of assessments that classroom teachers use. First we discuss the informal techniques teachers use during instruction that typically require instantaneous decisions. Then we consider formal assessment techniques that teachers plan before instruction and allow for reflective decisions.

Teachers’ observation, questioning, and record keeping

During teaching, teachers not only have to communicate the information they planned but also continuously monitor students’ learning and motivation in order to determine whether modifications have to be made (Airasian, 2005). Beginning teachers find this more difficult than experienced teachers because of the complex cognitive skills required to improvise and be responsive to students’ needs while simultaneously keeping in mind the goals and plans of the lesson (Borko & Livingston, 1989). The informal assessment strategies teachers most often use during instruction are observation and questioning.

Observation

Effective teachers observe their students from the time they enter the classroom. Some teachers greet their students at the door not only to welcome them but also to observe their mood and motivation. Are Hannah and Naomi still not talking to each other? Does Ethan have his materials with him? Gaining information on such questions can help the teacher foster student learning more effectively (e.g. suggesting Ethan go back to his locker to get his materials before the bell rings, or avoiding assigning Hannah and Naomi to the same group).

During instruction, teachers observe students’ behavior to gain information about students’ level of interest and understanding of the material or activity. Observation includes looking at non-verbal behaviors as well as listening to what the students are saying. For example, a teacher may observe that a number of students are looking out of the window rather than watching the science demonstration, or a teacher may hear students making comments in their group indicating they do not understand what they are supposed to be doing. Observations also help teachers decide which student to call on next, whether to speed up or slow down the pace of the lesson, when more examples are needed, whether to begin or end an activity, how well students are performing a physical activity, and if there are potential behavior problems (Airasian, 2005). Many teachers find that moving around the classroom helps them observe more effectively because they can see more students from a variety of perspectives. However, the fast pace and complexity of most classrooms make it difficult for teachers to gain as much information as they want.

Questioning

Teachers ask questions for many instructional reasons, including keeping students’ attention on the lesson, highlighting important points and ideas, promoting critical thinking, allowing students to learn from each other’s answers, and providing information about students’ learning. Devising good questions and using students’ responses to make effective instantaneous instructional decisions is very difficult. Some strategies to improve questioning include planning and writing down the instructional questions that will be asked, allowing sufficient wait time for students to respond, listening carefully to what students say rather than listening for what is expected, varying the types of questions asked, making sure some of the questions are higher level, and asking follow-up questions.

While informal assessment based on spontaneous observation and questioning is essential for teaching, there are inherent problems with the validity, reliability, and bias of this information (Airasian, 2005; Stiggins, 2005). We summarize these issues and some ways to reduce the problems in Table 36.

Table 36: Validity and reliability of observation and questioning

| Problem | Strategies to alleviate problem |
|---|---|
| Teachers’ lack of objectivity about overall class involvement and understanding | Try to make sure you are not seeing only what you want to see. Teachers typically want to feel good about their instruction, so it is easy to look for positive student interactions. Occasionally, teachers want to see negative student reactions to confirm their beliefs about an individual student or class. |
| Tendency to focus on process rather than learning | Remember to concentrate on student learning, not just involvement. Most of teachers’ observations focus on process (student attention, facial expressions, posture) rather than pupil learning. Students can be active and engaged but not developing new skills. |
| Limited information and selective sampling | Make sure you observe a variety of students, not just those who are typically very good or very bad. Walk around the room to observe more students “up close” and view the room from multiple perspectives. Call on a wide variety of students, not just those with their hands up, those who are skilled in the subject, or those who sit in a particular place in the room. Keep records. |
| Fast pace of classrooms inhibits corroborative evidence | If you want to know whether you are missing important information, ask a peer to visit your classroom and observe the students’ behaviors. Classrooms are complex and fast paced, and one teacher cannot see much of what is going on while also trying to teach. |
| Cultural and individual differences in the meaning of verbal and non-verbal behaviors | Be cautious in the conclusions that you draw from your observations and questions. Remember that the meanings and expectations of certain types of questions, wait time, social distance, and the role of “small talk” vary across cultures (Chapter 5). Some students are quiet because of their personalities, not because they are uninvolved, not keeping up with the lesson, depressed, or tired. |

Record keeping

Keeping records of observations improves reliability and can be used to enhance understanding of one student, a group, or the whole class’s interactions. Sometimes this requires help from other teachers. For example, Alexis, a beginning science teacher, is aware of the research documenting that longer wait time enhances students’ learning (e.g. Rowe, 2003) but is unsure about her own wait times, so she asks a colleague to observe and record her wait times during one class period. Alexis learns her wait times are very short for all students, so she starts practicing silently counting to five whenever she asks students a question.
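A colleague’s wait-time record is just a list of durations, so summarizing it takes only a few lines. This Python sketch assumes the observer logs, in seconds, the pause between each question and the moment a student is called on; the function name, the sample data, and the choice of five seconds as the target (borrowed from Alexis’s counting practice, not from the research) are hypothetical.

```python
import statistics


def wait_time_summary(wait_times, target_seconds):
    """Summarize an observer's record of wait times (in seconds) for one class period.

    Returns the mean wait time and the share of questions where the teacher
    waited at least target_seconds before calling on a student.
    """
    if not wait_times:
        raise ValueError("no wait times recorded")
    mean_wait = statistics.mean(wait_times)
    share_long = sum(t >= target_seconds for t in wait_times) / len(wait_times)
    return mean_wait, share_long


# Hypothetical record from one class period, for illustration only.
recorded = [0.8, 1.2, 0.5, 1.0, 2.1, 0.9]
mean_wait, share = wait_time_summary(recorded, target_seconds=5)
print(f"mean wait {mean_wait:.1f} s; {share:.0%} of questions reached 5 s")
```

Repeating the same summary after a few weeks of practice would give Alexis a simple before-and-after comparison instead of relying on her impression.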

Teachers can keep anecdotal records about students without help from peers. These records contain a description of an incident of a student’s behavior, the time and place of the incident, and a tentative interpretation of the incident. For example, the description of the incident might involve Joseph, a second grade student, who fell asleep during mathematics class on a Monday morning. A tentative interpretation could be that the student did not get enough sleep over the weekend, but alternative explanations could be that the student is sick or is on medications that make him drowsy. Obviously additional information is needed, and the teacher could ask Joseph why he is so sleepy and also observe him to see if he looks tired and sleepy over the next couple of weeks.
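The three parts of an anecdotal record (the incident, its time and place, and a tentative interpretation) map naturally onto a small record type. The Python sketch below is one hypothetical way to structure such records; `AnecdotalRecord` and `records_for` are illustrative names, and the date for Joseph’s incident is made up. Keeping alternative explanations alongside the first interpretation, and reviewing a student’s records in time order, reflects the advice above to gather more information before drawing conclusions.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List


@dataclass
class AnecdotalRecord:
    """One anecdotal record: the incident, when and where, and a tentative reading."""
    student: str
    description: str                 # what the student did, stated factually
    when: datetime                   # time of the incident
    place: str                       # where it happened
    tentative_interpretation: str
    # Other plausible explanations, so the first guess is not treated as fact.
    alternative_explanations: List[str] = field(default_factory=list)


def records_for(records, student):
    """Return one student's records in time order, so patterns can be checked over weeks."""
    return sorted((r for r in records if r.student == student), key=lambda r: r.when)


# Hypothetical record based on the Joseph example (the date is invented).
joseph = AnecdotalRecord(
    student="Joseph",
    description="Fell asleep during mathematics class",
    when=datetime(2024, 9, 9, 9, 30),  # a Monday morning
    place="Classroom, mathematics lesson",
    tentative_interpretation="Did not get enough sleep over the weekend",
    alternative_explanations=["Is sick", "Medication makes him drowsy"],
)
print(records_for([joseph], "Joseph"))
```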

Anecdotal records often provide important information and are better than relying on one’s memory, but they take time to maintain and it is difficult for teachers to be objective. For example, after seeing Joseph fall asleep, the teacher may now look for any signs of Joseph’s sleepiness, ignoring the days he is not sleepy. Also, it is hard for teachers to sample a wide enough range of data for their observations to be highly reliable.

Teachers also conduct more formal observations, especially for students with special needs who have IEPs. An example of the importance of informal and formal observations in a preschool follows:

The class of preschoolers in a suburban neighborhood of a large city has eight special needs students and four students, the peer models, who have been selected because of their well-developed language and social skills. Some of the special needs students have been diagnosed with delayed language, some with behavior disorders, and several with autism. The students are sitting on the mat with the teacher, who has a box with sets of three “cool” things of varying size (e.g. toy pandas), and the students are asked to put the things in order by size: big, medium, and small. Students who are able are also asked to point to each item in turn and say “This is the big one”, “This is the medium one” and “This is the little one”. For some students, only two choices (big and little) are offered because that is appropriate for their developmental level. The teacher informally observes that one of the boys is having trouble keeping his legs still, so she quietly asks the aide for a weighted pad that she places on the boy’s legs to help him keep them still. The activity continues, and the aide carefully observes students’ behaviors and records on IEP progress cards whether a child meets specific objectives such as: “When given two picture or object choices, Mark will point to the appropriate object in 80 per cent of the opportunities.” The teacher and aides keep records of the relevant behavior of the special needs students during the half day they are in preschool. The daily records are summarized weekly. If not enough observations have been recorded for a specific objective, the teacher and aide focus their observations more on that child and, if necessary, try to create specific situations that relate to that objective. At the end of each month the teacher calculates whether the special needs children are meeting their IEP objectives.
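The monthly check described above combines two decisions: whether there are enough recorded opportunities to judge, and whether the success rate reaches the objective’s criterion (80 per cent in Mark’s case). A minimal Python sketch, assuming each opportunity is recorded as True (met) or False (not met); the function name and the minimum of ten observations are hypothetical choices, not part of any IEP standard.

```python
def objective_status(opportunities, target=0.80, min_observations=10):
    """Summarize recorded opportunities for one IEP objective.

    opportunities: list of booleans, True when the child met the objective.
    target: required success rate (80 per cent in the Mark example).
    min_observations: hypothetical minimum before drawing any conclusion.
    """
    n = len(opportunities)
    if n < min_observations:
        # Mirrors the practice of focusing observations on the child first.
        return "need more observations"
    rate = sum(opportunities) / n
    return "meeting objective" if rate >= target else "not yet meeting objective"


# Hypothetical month of records for Mark: 17 successes in 20 opportunities.
mark = [True] * 17 + [False] * 3
print(objective_status(mark))
```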

Selected response items

Common formal assessment formats used by teachers are multiple choice, matching, and true/false items. In selected response items students have to select a response provided by the teacher or test developer rather than constructing a response in their own words or actions. Selected response items do not require that students recall the information but rather that they recognize the correct answer. Tests with these items are called objective because the results are not influenced by scorers’ judgments or interpretations, and so they are often machine scored. Eliminating potential errors in scoring increases the reliability of tests, but teachers who only use objective tests are liable to reduce the validity of their assessment because objective tests are not appropriate for all learning goals (Linn & Miller, 2005). Effective assessment for learning, as well as assessment of learning, must be based on aligning the assessment technique to the learning goals and outcomes.

For example, if the goal is for students to conduct an experiment, then they should be asked to do that rather than being asked about conducting an experiment.

Common problems

Selected response items are easy to score but are hard to devise. Teachers often do not spend enough time constructing items and common problems include:

- Unclear wording in the items

  - True or False: Although George Washington was born into a wealthy family, his father died when he was only 11, he worked as a youth as a surveyor of rural lands, and later stood on the balcony of Federal Hall in New York when he took his oath of office in 1789.

- Cues that are not related to the content being examined

  - A common clue is that all the true statements on a true/false test, or the correct alternatives on a multiple choice test, are longer than the untrue statements or the incorrect alternatives.

- Using negatives (or double negatives) in the items

  - A poor item: True or False: “None of the steps made by the student was unnecessary.”

  - A better item: True or False: “All of the steps were necessary.”

  Students often do not notice the negative terms or find them confusing, so avoiding them is generally recommended (Linn & Miller, 2005). However, since standardized tests often use negative items, teachers sometimes deliberately include some negative items to give students practice in responding to that format.

- Taking sentences directly from textbook or lecture notes

  - Removing the words from their context often makes them ambiguous or can change the meaning. For example, a statement from Chapter 4 taken out of context suggests all children are clumsy: “Similarly with jumping, throwing and catching: the large majority of children can do these things, though often a bit clumsily.” A fuller quotation makes it clearer that this sentence refers to 5-year-olds: “For some fives, running still looks a bit like a hurried walk, but usually it becomes more coordinated within a year or two. Similarly with jumping, throwing and catching: the large majority of children can do these things, though often a bit clumsily, by the time they start school, and most improve their skills noticeably during the early elementary years.” If the abbreviated form were used as the stem in a true/false item, it would obviously be misleading.

- Trivial questions

  - e.g. Jean Piaget was born in what year?

    a) 1896

    b) 1900

    c) 1880

    d) 1903

  While it is important to know approximately when Piaget made his seminal contributions to the understanding of child development, the exact year of his birth (1896) is not important.
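A teacher reviewing a draft multiple choice item can screen it for the length cue described above with a simple check. The sketch is hypothetical: `has_length_cue` only flags items whose keyed answer is strictly longer than every distractor, which is one crude heuristic, not a validated item-analysis method.

```python
def has_length_cue(alternatives, key_index):
    """Flag an item whose correct alternative is strictly longer than every distractor."""
    key_length = len(alternatives[key_index])
    distractor_lengths = [len(a) for i, a in enumerate(alternatives) if i != key_index]
    return all(key_length > n for n in distractor_lengths)


# Hypothetical draft item: the keyed answer (index 2) stands out by its length.
draft = ["Spain", "France", "Colonists who sailed mainly from Great Britain", "Holland"]
print(has_length_cue(draft, key_index=2))  # True
```

A flagged item is not necessarily faulty; the check only tells the item writer where to look before the test is given.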

Strengths and weaknesses

All types of selected response items have strengths and weaknesses. True/false items are appropriate for measuring factual knowledge such as vocabulary, formulae, dates, proper names, and technical terms. They are very efficient because they use a simple structure that students can easily understand and take little time to complete. They are also easier to construct than multiple choice and matching items. However, students have a 50 per cent probability of getting each answer correct by guessing, so it can be difficult to interpret how much students know from their test scores. Common problems that arise when devising true/false, matching, and multiple choice items are summarized in Table 37.
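To see why guessing makes true/false scores hard to interpret, the binomial distribution gives the chance that a student who knows nothing reaches a given score by guessing alone. In this sketch the 10-item test and the 7-correct cutoff are arbitrary illustrative choices, and it assumes every guess is independent with a probability of 1/2 of being correct.

```python
from math import comb


def prob_at_least(n_items, min_correct, p_guess):
    """Chance of at least min_correct right answers out of n_items by blind guessing.

    Assumes each guess is independent and correct with probability p_guess
    (a binomial model).
    """
    return sum(
        comb(n_items, k) * p_guess**k * (1 - p_guess) ** (n_items - k)
        for k in range(min_correct, n_items + 1)
    )


# Hypothetical 10-item true/false test: chance of 7 or more correct from guessing alone.
print(round(prob_at_least(10, 7, 0.5), 3))  # 0.172
```

Under these assumptions, roughly one student in six could score 70 per cent on such a test without knowing any of the material.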

Table 37: Common errors in selected response items

| Type of item | Common errors | Example |
|---|---|---|
| True/False | The statement is not absolutely true, typically because it contains a broad generalization. | T F The President of the United States is elected to that office. This is usually true, but the US Vice President can succeed the President. |
| | The item is opinion, not fact. | T F Education for K-12 students is improved through policies that support charter schools. Some people believe this; some do not. |
| | Two ideas are included in one item. | T F George H. W. Bush, the 40th president of the US, was defeated by William Jefferson Clinton in 1992. The first idea is false and the second is true, making it difficult for students to decide whether to circle T or F. |
| | Irrelevant cues | T F The President of the United States is usually elected to that office. True items contain words such as usually or generally, whereas false items contain terms such as always, all, or never. |
| Matching | Columns do not contain homogeneous information. | Directions: On the line next to each US Civil War battle, write the year or Confederate general from Column B. Column B is a mixture of generals and dates. |
| | Too many items in each list. | Lists should be relatively short (4–7 items) in each column. More than 10 are too confusing. |
| | Responses are not in logical order. | In the example with Spanish and English words, the responses should be in a logical order (here they are alphabetical). If the order is not logical, students spend too much time searching for the correct answer. |
| Multiple choice | The problem (i.e. the stem) is not clearly stated. | New Zealand a) Is the world’s smallest continent b) Is home to the kangaroo c) Was settled mainly by colonists from Great Britain d) Is a dictatorship. This is really a series of true/false items. Because the correct answer is c), a better version with the problem in the stem is: Much of New Zealand was settled by colonists from a) Great Britain b) Spain c) France d) Holland |
| | Some of the alternatives are not plausible. | Who is best known for their work on the development of the morality of justice? Obviously Gerald Ford is not a plausible alternative. |
| | Irrelevant cues, such as the use of “All of the above” | |

In matching items, two parallel columns containing terms, phrases, symbols, or numbers are presented and the student is asked to match the items in the first column with those in the second column. Typically there are more items in the second column to make the task more difficult and to ensure that if a student makes one error they do not have to make another. Matching items most often are used to measure lower level knowledge such as persons and their achievements, dates and historical events, terms and definitions, symbols and concepts, plants or animals and classifications (Linn & Miller, 2005). An example with Spanish language words and their English equivalents is below:

Directions: On the line to the left of the Spanish word in Column A, write the letter of the English word in Column B that has the same meaning.

| Column A | Column B |
|---|---|
| ____ 1. Casa | A. Aunt |
| ____ 2. Bebé | B. Baby |
| ____ 3. Gata | C. Brother |
| ____ 4. Perro | D. Cat |
| ____ 5. Hermano | E. Dog |
| | F. Father |
| | G. House |

While matching items may seem easy to devise, it is hard to create homogeneous lists. Other problems with matching items, and suggested remedies, are in Table 37.
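The construction guidelines for matching items (short lists, more responses than premises, responses in a logical order) can be turned into a quick self-check for a draft item. The function name and the exact limit are illustrative assumptions drawn from the 4–7 guideline in Table 37; whether the lists are homogeneous still has to be judged by the teacher.

```python
def check_matching_item(premises, responses, max_len=7):
    """Return a list of warnings for a draft matching item (hypothetical helper)."""
    warnings = []
    if len(premises) > max_len or len(responses) > max_len:
        warnings.append(f"lists longer than {max_len} items may confuse students")
    if len(responses) <= len(premises):
        warnings.append("give more responses than premises so one error does not force another")
    if list(responses) != sorted(responses):
        warnings.append("put responses in a logical (e.g. alphabetical) order")
    return warnings


column_a = ["Casa", "Bebé", "Gata", "Perro", "Hermano"]
column_b = ["Aunt", "Baby", "Brother", "Cat", "Dog", "Father", "House"]
print(check_matching_item(column_a, column_b))  # []
```

The Spanish vocabulary item passes all three checks because Column B has two extra responses and is already alphabetical.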

Multiple choice items are the most commonly used type of objective test item because they have a number of advantages over other objective test items. Most importantly, they can be adapted to assess higher level thinking, such as application, as well as lower level factual knowledge. The first example below assesses knowledge of a specific fact, whereas the second example assesses application of knowledge.

Who is best known for their work on the development of the morality of justice?

a. Erikson

b. Vygotsky

c. Maslow

d. Kohlberg

Which one of the following best illustrates the law of diminishing returns?

a. A factory doubled its labor force and increased production by 50 per cent

b. The demand for an electronic product increased faster than the supply of the product

c. The population of a country increased faster than agricultural self-sufficiency

d. A machine decreased in efficacy as its parts became worn out (Adapted from Linn & Miller, 2005, p. 193).

There are several other advantages of multiple choice items. Students have to recognize the correct answer, not merely recognize that a statement is incorrect, as they can in true/false items. The opportunity for guessing is also reduced because four or five alternatives are usually provided, whereas in true/false items students only have to choose between two. Finally, multiple choice items do not need homogeneous material as matching items do.
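The reduction in guessing can be made concrete by comparing the score a student would expect from blind guessing alone under each format. This is a minimal sketch assuming a hypothetical 20-item test and equally likely alternatives on every item.

```python
def expected_chance_score(n_items, n_alternatives):
    """Expected number of correct answers from blind guessing on every item."""
    return n_items / n_alternatives


# The same hypothetical 20-item test written in three formats.
for label, k in [("true/false", 2), ("4-option multiple choice", 4), ("5-option multiple choice", 5)]:
    print(f"{label}: {expected_chance_score(20, k):.1f} of 20 correct expected by guessing")
```

Halving the chance score (from 10 to 5 of 20) is what makes multiple choice scores easier to interpret than true/false scores.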

However, creating good multiple choice test items is difficult, and students (maybe including you) often become frustrated when taking a test with poor multiple choice items. Three steps have to be considered when constructing a multiple choice item: formulating a clearly stated problem, identifying plausible alternatives, and removing irrelevant clues to the answer. Common problems in each of these steps are summarized in Table 37.

Constructed response items

Formal assessment also includes constructed response items, in which students are asked to recall information and create an answer (not just recognize whether an answer is correct), so guessing is reduced. Constructed response items can be used to assess a wide variety of kinds of knowledge, and two major kinds are discussed here: completion or short answer (also called short response) and extended response.

Completion and short answer

Completion and short answer items can be answered in a word, phrase, number, or symbol. These types of items are essentially the same, varying only in whether the problem is presented as a statement or a question (Linn & Miller, 2005). For example:

Completion: The first traffic light in the US was invented by…………….

Short Answer: Who invented the first traffic light in the US?

These items are often used in mathematics tests, e.g.

3 + 10 = …………..?

If x = 6, what does x(x-1) equal? ……….

Draw the line of symmetry on the following shape: [shape not reproduced]

A major advantage of these items is that they are easy to construct. However, apart from their use in mathematics, they are unsuitable for measuring complex learning outcomes and are often difficult to score. Completion and short answer tests are sometimes called objective tests because the intent is that there is only one correct answer and so no variability in scoring; but unless the question is phrased very carefully, there are frequently a variety of correct answers. For example, consider the item:

Where was President Lincoln born?………………..

The teacher may expect the answer “in a log cabin” but other correct answers are also “on Sinking Spring Farm”, “in Hardin County” or “in Kentucky”. Common errors in these items are summarized in Table 38.
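When a completion or short answer item has several defensible answers, writing the scoring key as a set of accepted answers makes scoring consistent across papers. This sketch assumes simple normalization (ignoring case and surrounding spaces and punctuation) is enough; the accepted answers are the ones listed above for the Lincoln item, and the helper names are hypothetical.

```python
import string


def normalize(answer):
    """Lower-case and strip surrounding whitespace and punctuation."""
    return answer.strip().strip(string.punctuation).strip().lower()


def score_short_answer(response, accepted):
    """Return 1 if the response matches any accepted answer, else 0."""
    return int(normalize(response) in {normalize(a) for a in accepted})


lincoln_key = ["in a log cabin", "on Sinking Spring Farm", "in Hardin County", "in Kentucky"]
print(score_short_answer("In Kentucky.", lincoln_key))  # 1
print(score_short_answer("Illinois", lincoln_key))      # 0
```

Exact matching still misses variants such as "Kentucky" without "in", so a teacher would extend the key or review unmatched answers by hand rather than rely on the key alone.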

Table 38: Common errors in constructed response items

| Type of item | Common errors | Example |
|---|---|---|
| Completion and short answer | There is more than one possible answer. | e.g. Where was US President Lincoln born? The answer could be in a log cabin, in Kentucky, etc. |
| | Too many blanks are in the completion item, so it is too difficult or doesn’t make sense. | e.g. In ….. theory, the first stage is when infants process through their ……. and ….. ……… |
| | Clues are given by the length of blanks in completion items. | e.g. Three states are contiguous to New Hampshire: ….. is to the West, …… is to the East, and ………… is to the South. |
| Extended response | Ambiguous questions | e.g. Was the US Civil War avoidable? Students could interpret this question in a wide variety of ways, perhaps even stating only “yes” or “no”. One student may discuss only political causes, another moral, political, and economic causes. There is no guidance in the question for students. |
| | Poor reliability in grading | The teacher does not use a scoring rubric and so is inconsistent in how he scores answers, especially unexpected responses, irrelevant information, and grammatical errors. |
| | Perception of student influences grading | By spring semester the teacher has developed expectations of each student’s performance, and this influences the grading (numbers can be used instead of names). The test consists of three constructed responses, and the teacher grades all three answers on each student’s paper before moving to the next paper. This means that the grading of questions 2 and 3 is influenced by the answers to question 1 (teachers should grade all the answers to the 1st question, then the 2nd, etc.). |
| | Choices are given on the test and some answers are easier than others. | Testing experts recommend not giving choices in tests, because then students are not really taking the same test, which creates equity problems. |
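The grading order recommended in Table 38 (numbers instead of names, and all answers to question 1 before any answers to question 2) can be laid out as a simple schedule. The helper below is hypothetical, and reshuffling the paper order for each question is an added assumption, intended to keep the same papers from always being read first.

```python
import random


def grading_schedule(student_names, n_questions, seed=None):
    """Build an anonymized, question-by-question grading order.

    Returns (id_to_name, schedule). id_to_name maps paper numbers back to
    students and is set aside until grading is finished; schedule lists
    (question, paper_id) pairs so every answer to one question is graded
    before moving on to the next question.
    """
    rng = random.Random(seed)
    ids = list(range(1, len(student_names) + 1))
    rng.shuffle(ids)
    id_to_name = dict(zip(ids, student_names))
    schedule = []
    for question in range(1, n_questions + 1):
        order = sorted(id_to_name)
        rng.shuffle(order)  # vary the paper order for each question
        schedule.extend((question, paper_id) for paper_id in order)
    return id_to_name, schedule


names, plan = grading_schedule(["Ana", "Ben", "Chloe"], n_questions=3, seed=1)
print(plan[:3])  # the three papers for question 1 come first
```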

Extended response

Extended response items are used in many content areas, and answers may vary in length from a paragraph to several pages. Questions that require longer responses are often called essay questions. Extended response items have several advantages, the most important being their adaptability for measuring complex learning outcomes, particularly integration and application. These items also require that students write, and therefore provide teachers a way to assess writing skills. A commonly cited advantage of these items is their ease of construction; however, carefully worded items that are related to learning outcomes and assess complex learning are hard to devise (Linn & Miller, 2005). Well-constructed items phrase the question so the student’s task is clear. Often this involves providing hints or planning notes. In the first example below, the actual question is clear not only because of the wording but also because of the format (i.e. it is placed in a box). In the second and third examples, planning notes are provided:

Example 1: Third grade mathematics:

The owner of a bookstore gave 14 books to the school. The principal will give an equal number of books to each of three classrooms and the remaining books to the school library. How many books could the principal give to each classroom and the school library?

Show all your work on the space below and on the next page. Explain in words how you found the answer. Tell why you took the steps you did to solve the problem.

From Illinois Standards Achievement Test, 2006; (http://www.isbe.state.il.us/assessment/isat.htm)
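Because the bookstore item asks how many books the principal could give, it has several correct answers, which is one reason it needs a scoring rubric rather than a single answer key. The short enumeration below lists them; it assumes each classroom receives at least one book, and whether zero books per classroom would count is a judgment call the item does not settle.

```python
TOTAL_BOOKS = 14
CLASSROOMS = 3

# Each classroom gets the same number of books; the library gets whatever remains.
# Assumption: every classroom receives at least one book.
options = [
    (per_class, TOTAL_BOOKS - CLASSROOMS * per_class)
    for per_class in range(1, TOTAL_BOOKS // CLASSROOMS + 1)
]
for per_class, library in options:
    print(f"{per_class} books per classroom, {library} to the library")
```

This yields four valid splits, from 1 book per classroom with 11 for the library up to 4 per classroom with 2 for the library.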

Example 2: Fifth grade science: The grass is always greener

Jose and Maria noticed three different types of soil, black soil, sand, and clay, were found in their neighborhood. They decided to investigate the question, “How does the type of soil (black soil, sand, and clay) under grass sod affect the height of grass?”

Plan an investigation that could answer their new question. In your plan, be sure to include:

- Prediction of the outcome of the investigation

- Materials needed to do the investigation

- Procedure that includes:

  - logical steps to do the investigation

  - one variable kept the same (controlled)

  - one variable changed (manipulated)

  - any variables being measured and recorded

  - how often measurements are taken and recorded

(From Washington State 2004 assessment of student learning, http://www.k12.wa.us/assessment/WASL/default.aspx)

Example 3: Grades 9-11 English:

Writing prompt

Some people think that schools should teach students how to cook. Other people think that cooking is something that ought to be taught in the home. What do you think? Explain why you think as you do.

Planning notes

Choose One:

- I think schools should teach students how to cook

- I think cooking should be taught in the home

I think cooking should be taught in _____________________because________________

(school) or (the home)

(From Illinois Measure of Annual Growth in English http://www.isbe.state.il.us/assessment/image.htm)

A major disadvantage of extended response items is the difficulty of scoring them reliably. Not only do different teachers score the same response differently, but the same teacher may also score an identical response differently on different occasions (Linn & Miller, 2005). A variety of steps can be taken to improve the reliability and validity of scoring. First, teachers should begin by writing an outline of a model answer, which makes clear what students are expected to include. Second, a sample of the answers should be read. This assists in determining what the students can do and whether the question has given rise to any common misconceptions. Third, teachers have to decide what to do about irrelevant information that is included (e.g. whether it is ignored or students are penalized) and how to evaluate mechanical errors such as grammar and spelling. Finally, a point scoring guide or a scoring rubric should be used.
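One way a teacher (or two teachers) might check the scoring consistency discussed above is to score the same set of responses twice and compute how often the scores agree. This is a minimal sketch of simple percent agreement, which is our choice of index rather than one the text prescribes; the scores are hypothetical values for illustration only.

```python
def percent_agreement(scores_a, scores_b, tolerance=0):
    """Share of responses on which two scorings agree within `tolerance` points."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both scorings must cover the same responses")
    agree = sum(abs(a - b) <= tolerance for a, b in zip(scores_a, scores_b))
    return agree / len(scores_a)


# Hypothetical rubric scores (0-4) given to the same eight essays on two occasions.
first_pass = [4, 3, 2, 3, 1, 2, 4, 0]
second_pass = [4, 2, 2, 3, 2, 2, 3, 0]
print(percent_agreement(first_pass, second_pass))               # exact agreement
print(percent_agreement(first_pass, second_pass, tolerance=1))  # within one point
```

Low exact agreement on a re-read is a signal to revisit the model answer or rubric before grades are recorded.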

In point scoring, components of the answer are assigned points. For example, suppose students were asked: What are the nature, symptoms, and risk factors of hyperthermia?

Point Scoring Guide:

| Definition (nature) | Symptoms (1 pt each) | Risk factors (1 pt each) | Writing |
|---|---|---|---|
| 2 pts | 5 pts | 5 pts | 3 pts |
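The point scoring guide can be applied almost mechanically: each component has a maximum, symptoms and risk factors earn one point each, and the total is out of 15. The function below is a hypothetical sketch of that guide, and the sample answer is invented for illustration. It also shows the limitation discussed next, since it counts facts rather than judging the quality of thinking.

```python
# Maximum points for each component of the point scoring guide (15 in total).
MAX_POINTS = {"definition": 2, "symptoms": 5, "risk_factors": 5, "writing": 3}


def point_score(definition_pts, symptoms_named, risk_factors_named, writing_pts):
    """Apply the point scoring guide to one answer about hyperthermia.

    Symptoms and risk factors earn 1 point each (duplicates count once), and
    every component is capped at its maximum from the guide.
    """
    awarded = {
        "definition": min(definition_pts, MAX_POINTS["definition"]),
        "symptoms": min(len(set(symptoms_named)), MAX_POINTS["symptoms"]),
        "risk_factors": min(len(set(risk_factors_named)), MAX_POINTS["risk_factors"]),
        "writing": min(writing_pts, MAX_POINTS["writing"]),
    }
    return awarded, sum(awarded.values())


# Hypothetical answer: full definition, three symptoms, two risk factors, writing 2 of 3.
awarded, total = point_score(
    definition_pts=2,
    symptoms_named=["headache", "dizziness", "nausea"],
    risk_factors_named=["dehydration", "strenuous exercise in hot weather"],
    writing_pts=2,
)
print(awarded, total)  # total is 9 of a possible 15
```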

This provides some guidance for evaluation and helps consistency, but point scoring systems often lead the teacher to focus on facts (e.g. naming risk factors) rather than on higher level thinking. This may undermine the validity of the assessment if the teacher’s purposes include higher level thinking. A better approach is to use a scoring rubric that describes the quality of the answer or performance at each level.

Scoring rubrics

Scoring rubrics can be holistic or analytical. In holistic scoring rubrics, general descriptions of performance are made and a single overall score is obtained. An example from grade 2 language arts in the Los Angeles Unified School District, which classifies responses into four levels (not proficient, partially proficient, proficient, and advanced), is shown in Table 39.

Table 39: Example of holistic scoring rubric: English language arts grade 2

Assignment. Write about an interesting, fun, or exciting story you have read in class this year. Some of the things you could write about are:

In your writing make sure you use facts and details from the story to describe everything clearly. After you write about the story, explain what makes the story interesting, fun, or exciting.

| Scoring rubric | Description |
|---|---|
| Advanced (Score 4) | The response demonstrates well-developed reading comprehension skills. Major story elements (plot, setting, or characters) are clearly and accurately described. Statements about the plot, setting, or characters are arranged in a manner that makes sense. Ideas or judgments (why the story is interesting, fun, or exciting) are clearly supported or explained with facts and details from the story. |
| Proficient (Score 3) | The response demonstrates solid reading comprehension skills. Most statements about the plot, setting, or characters are clearly described. Most statements about the plot, setting, or characters are arranged in a manner that makes sense. Ideas or judgments are supported with facts and details from the story. |
| Partially Proficient (Score 2) | The response demonstrates some reading comprehension skills. There is an attempt to describe the plot, setting, or characters. Some statements about the plot, setting, or characters are arranged in a manner that makes sense. Ideas or judgments may be supported with some facts and details from the story. |
| Not Proficient (Score 1) | The response demonstrates little or no skill in reading comprehension. The plot, setting, or characters are not described, or the description is unclear. Statements about the plot, setting, or characters are not arranged in a manner that makes sense. Ideas or judgments are not stated, and facts and details from the text are not used. |

Source: Adapted from English Language Arts Grade 2, Los Angeles Unified School District, 2001 (http://www.cse.ucla.edu/resources/justforteachers_set.htm)

Analytical rubrics provide descriptions of levels of student performance on a variety of characteristics. For example, the six characteristics for assessing writing developed by the Northwest Regional Educational Laboratory (NWREL) are:

- ideas and content

- organization

- voice

- word choice

- sentence fluency

- conventions

Descriptions of high, medium, and low responses for each characteristic are available from http://www.nwrel.org/assessment/toolkit98/traits/index.html.

Holistic rubrics have the advantage that they can be developed more quickly than analytical rubrics. They are also faster to use, as there is only one dimension to examine. However, they do not provide students feedback about which aspects of the response are strong and which need improvement (Linn & Miller, 2005), which makes them less useful for assessment for learning. An important use of rubrics is as teaching tools: providing them to students before the assessment lets students know what knowledge and skills are expected.
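The feedback difference between holistic and analytic rubrics becomes clear when scores are recorded per trait. This sketch assumes a 1–5 scale for each of the six NWREL traits (the scale is our assumption; the NWREL descriptors define the actual levels) and reports the trait most in need of work, something a single holistic score cannot provide. The essay scores are hypothetical.

```python
TRAITS = ["ideas and content", "organization", "voice",
          "word choice", "sentence fluency", "conventions"]


def analytic_feedback(scores):
    """Summarize analytic rubric scores: strongest trait, weakest trait, and mean.

    scores maps each trait to a level on an assumed 1-5 scale; ties go to the
    trait listed first in TRAITS.
    """
    missing = [t for t in TRAITS if t not in scores]
    if missing:
        raise ValueError(f"missing traits: {missing}")
    strongest = max(TRAITS, key=lambda t: scores[t])
    weakest = min(TRAITS, key=lambda t: scores[t])
    mean = sum(scores[t] for t in TRAITS) / len(TRAITS)
    return {"strongest": strongest, "needs work": weakest, "mean": round(mean, 2)}


essay = {"ideas and content": 4, "organization": 3, "voice": 5,
         "word choice": 4, "sentence fluency": 3, "conventions": 2}
print(analytic_feedback(essay))
```

A single mean of 3.5 would hide the fact that this writer's conventions, not voice, are the place to focus instruction.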

Teachers can use scoring rubrics as part of instruction by giving students the rubric during instruction, providing several responses, and analyzing these responses in terms of the rubric. For example, use of accurate terminology is one dimension of the science rubric in Table 40. An elementary science teacher could discuss why it is important for scientists to use accurate terminology, give examples of inaccurate and accurate terminology, provide that component of the scoring rubric to students, distribute some examples of student responses (maybe from former students), and then discuss how these responses would be classified according to the rubric. This strategy of assessment for learning should be more effective if the teacher (a) emphasizes to students why using accurate terminology is important when learning science rather than how to get a good grade on the test (we provide more details about this in the section on motivation later in this chapter); (b) provides an exemplary response so students can see a model; and (c) emphasizes that the goal is student improvement on this skill not ranking students.

Table 40: Example of a scoring rubric, Science

| Score | Level of understanding | Use of accurate scientific terminology | Use of supporting details | Synthesis of information | Application of information* |
|---|---|---|---|---|---|
| 4 | There is evidence in the response that the student has a full and complete understanding. | The use of accurate scientific terminology enhances the response. | Pertinent and complete supporting details demonstrate an integration of ideas. | The response reflects a complete synthesis of information. | An effective application of the concept to a practical problem or real-world situation reveals an insight into scientific principles. |
| 3 | There is evidence in the response that the student has a good understanding. | The use of accurate scientific terminology strengthens the response. | The supporting details are generally complete. | The response reflects some synthesis of information. | The concept has been applied to a practical problem or real-world situation. |
| 2 | There is evidence in the response that the student has a basic understanding. | The use of accurate scientific terminology may be present in the response. | The supporting details are adequate. | The response provides little or no synthesis of information. | The application of the concept to a practical problem or real-world situation is inadequate. |
| 1 | There is evidence in the response that the student has some understanding. | The use of accurate scientific terminology is not present in the response. | The supporting details are only minimally effective. | The response addresses the question. | The application, if attempted, is irrelevant. |
| 0 | The student has NO UNDERSTANDING of the question or problem. The response is completely incorrect or irrelevant. | | | | |

*On the High School Assessment, the application of a concept to a practical problem or real-world situation will be scored when it is required in the response and requested in the item stem.

Performance assessments

Typically in performance assessments students complete a specific task while teachers observe the process or procedure (e.g. data collection in an experiment) as well as the product (e.g. a completed report) (Popham, 2005; Stiggins, 2005). The tasks that students complete in performance assessments are not simple, in contrast to selected response items, and include the following:


- playing a musical instrument

- athletic skills

- artistic creation

- conversing in a foreign language

- engaging in a debate about political issues

- conducting an experiment in science

- repairing a machine

- writing a term paper

- using interaction skills to play together


These examples all involve complex skills but illustrate that the term performance assessment is used in a variety of ways. For example, the teacher may not observe all of the process (e.g. she sees a draft paper but the final product is written during out-of-school hours) and essay tests are typically classified as performance assessments (Airasian, 2000). In addition, in some performance assessments there may be no clear product (e.g. the performance may be group interaction skills).

Two related terms, alternative assessment and authentic assessment, are sometimes used instead of performance assessment, but they have different meanings (Linn & Miller, 2005). Alternative assessment refers to tasks that are not pencil-and-paper, and while many performance assessments are not pencil-and-paper tasks, some are (e.g. writing a term paper, essay tests). Authentic assessment is used to describe tasks that students do that are similar to those in the “real world”. Classroom tasks vary in level of authenticity (Popham, 2005). For example, for a Japanese language class taught in a high school in Chicago, conversing in Japanese in Tokyo is highly authentic, but only possible in a study abroad program or on a trip to Japan. Conversing in Japanese with native Japanese speakers in Chicago is also highly authentic, and conversing with the teacher in Japanese during class is moderately authentic. Much less authentic is a matching test on English and Japanese words. In a language arts class, writing a letter to an editor or a memo to the principal is highly authentic, as letters and memos are common work products. However, writing a five-paragraph paper is not as authentic, as such papers are not used in the world of work. Nevertheless, a five-paragraph paper is a complex task and would typically be classified as a performance assessment.

Advantages and disadvantages

There are several advantages of performance assessments (Linn & Miller, 2005). First, the focus is on complex learning outcomes that often cannot be measured by other methods. Second, performance assessments typically assess process or procedure as well as the product. For example, the teacher can observe whether the students are repairing the machine using the appropriate tools and procedures as well as whether the machine functions properly after the repairs. Third, well-designed performance assessments communicate the instructional goals and meaningful learning clearly to students. For example, if the topic in a fifth grade art class is one-point perspective, the performance assessment could be drawing a city scene that illustrates one-point perspective (http://www.sanford-artedventures.com). This assessment is meaningful and clearly communicates the learning goal. It is also a good instructional activity and has good content validity, which is common with well-designed performance assessments (Linn & Miller, 2005).

One major disadvantage of performance assessments is that they are typically very time consuming for students and teachers. This means that fewer assessments can be gathered, so if they are not carefully devised, fewer learning goals will be assessed, which can reduce content validity. State curriculum guidelines can be helpful in determining what should be included in a performance assessment. For example, Eric, a dance teacher in a high school in Tennessee, learns that the state standards indicate that dance students at the highest level should be able to demonstrate consistency and clarity in performing technical skills by:


- performing complex movement combinations to music in a variety of meters and styles

- performing combinations and variations in a broad dynamic range

- demonstrating improvement in performing movement combinations through self-evaluation

- critiquing a live or taped dance production based on given criteria (http://www.tennessee.gov/education/ci/standards/music/dance912.shtml)


Eric devises the following performance task for his eleventh grade modern dance class.

In groups of 4–6, students will perform a dance at least 5 minutes in length. The dance selected should be multifaceted so that all the dancers can demonstrate technical skills, complex movements, and a dynamic range (Items 1–2). Students will videotape their rehearsals and document how they improved through self-evaluation (Item 3). Each group will view and critique the final performance of one other group in class (Item 4).

Eric would need to scaffold most steps in this performance assessment. The groups would probably need guidance in selecting a dance that allows all the dancers to demonstrate the appropriate skills, critiquing their own performances constructively, working effectively as a team, and applying criteria to evaluate a dance.

Another disadvantage of performance assessments is that they are hard to score reliably, which can lead to inaccurate and unfair evaluation. As with any constructed response assessment, scoring rubrics are very important. Examples of holistic and analytic scoring rubrics designed to assess a completed product are in Table 39 and Table 40. A rubric designed to assess the process of group interactions is in Table 41.

Table 41: Example of group interaction rubric

| Score | Time management | Participation and performance in roles | Shared involvement |
|---|---|---|---|
| 0 | Group did not stay on task and so task was not completed. | Group did not assign or share roles. | Single individual did the task. |
| 1 | Group was off-task the majority of the time but task was completed. | Group assigned roles but members did not use these roles. | Group totally disregarded comments and ideas from some members. |
| 2 | Group stayed on task most of the time. | Group accepted and used some but not all roles. | Group accepted some ideas but did not give others adequate consideration. |
| 3 | Group stayed on task throughout the activity and managed time well. | Group accepted and used roles and actively participated. | Group gave equal consideration to all ideas. |
| 4 | Group defined their own approach in a way that more effectively managed the activity. | Group defined and used roles not mentioned to them. Role changes took place that maximized individuals’ expertise. | Group made specific efforts to involve all group members, including the reticent members. |

Source: Adapted from Group Interaction (GI) SETUP (2003). Issues, Evidence and You. Ronkonkoma, NY: Lab-Aids. (http://cse.edc.org/products/assessment/middleschool/scorerub.asp)

This rubric was devised for middle grade science but could be used in other subject areas when assessing group process. In some performance assessments several scoring rubrics should be used. In the dance performance example above, Eric should have scoring rubrics for the performance skills, the improvement based on self-evaluation, the teamwork, and the critique of the other group. Obviously, devising a good performance assessment is complex, and Linn and Miller (2005) recommend that teachers should:

- Create performance assessments that require students to use complex cognitive skills. Sometimes teachers devise assessments that are interesting and enjoyable for students but do not require them to use the higher-level cognitive skills that lead to significant learning. Focusing on high-level skills and learning outcomes is particularly important because performance assessments are typically so time consuming.

- Ensure that the task is clear to the students. Performance assessments typically require multiple steps, so students need the necessary prerequisite skills and knowledge as well as clear directions. Careful scaffolding is important for successful performance assessments.

- Specify expectations of the performance clearly by providing students with scoring rubrics during instruction. This not only helps students understand what is expected but also ensures that teachers are clear about what they expect. Thinking this through while planning the performance assessment can be difficult for teachers, but it is crucial because it typically leads to revisions of the actual assessment and of the directions provided to students.

- Reduce the importance of unessential skills in completing the task. Which skills are essential depends on the purpose of the task. For example, for a science report, is the use of publishing software essential? If the purpose of the assessment is for students to demonstrate the process of the scientific method, including writing a report, then the format of the report may not be significant. However, if the purpose includes integrating two subject areas, science and technology, then the use of publishing software is important. Because performance assessments take time, it is tempting to include multiple skills without carefully considering whether all of them are essential to the learning goals.

Portfolios

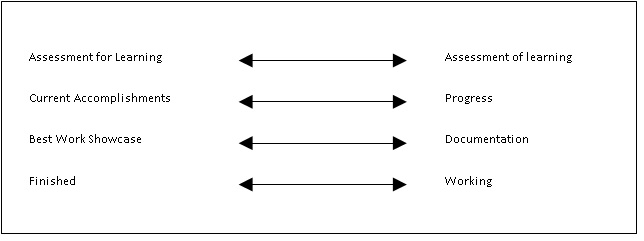

Figure: Four dimensions of portfolio purpose: assessment for learning vs. assessment of learning; progress vs. current accomplishments; best work showcase vs. documentation; working vs. finished.

“A portfolio is a meaningful collection of student work that tells the story of student achievement or growth” (Arter, Spandel, & Culham, 1995, p. 2). Portfolios are purposeful collections of student work, not just folders of all the work a student does. Portfolios are used for a variety of purposes, and developing a portfolio system can be confusing and stressful unless teachers are clear about its purpose. The varied purposes can be illustrated as four dimensions (Linn & Miller, 2005).

When the primary purpose is assessment for learning, the emphasis is on student self-reflection and responsibility for learning. Students not only select the samples of their work they wish to include but also reflect on and interpret their own work. Portfolios containing this information can aid communication, as students can present and explain their work to their teachers and parents (Stiggins, 2005). Portfolios focusing on assessment of learning contain work samples that certify students’ accomplishments for a classroom grade, graduation, state requirements, etc. Typically, students have less choice about the work contained in such portfolios because some consistency is needed for this type of assessment. For example, the writing portfolios that fourth and seventh graders are required to submit in Kentucky must contain a self-reflective statement and one example of each of three types of writing (reflective, personal experience or literary, and transactive). Students do, however, choose which of their pieces of each type to include in the portfolio.

(http://www.kde.state.ky.us/KDE/Instructional+Resources/Curriculum+Documents+and+Resources/Student+Performance+Standards/)

Portfolios can be designed to focus on student progress or on current accomplishments. For example, audio tapes of English language learners speaking could be collected over one year to demonstrate growth in learning. Student progress portfolios may also contain multiple versions of a single piece of work. For example, a writing project may contain notes on the original idea, an outline, a first draft, comments on the first draft by peers or the teacher, a second draft, and the final finished product (Linn & Miller, 2005). If the focus is on current accomplishments, only recently completed work samples are included.

Portfolios can focus on documenting student activities or on highlighting important accomplishments. Documentation portfolios are inclusive, containing all the work samples rather than focusing on one special strength, best work, or progress. In contrast, showcase portfolios focus on best work. The best work is typically identified by students. One aim of such portfolios is that students learn how to identify products that demonstrate what they know and can do. Students are not expected to identify their best work in isolation; they also use feedback from their teachers and peers.

A final distinction can be made between a finished portfolio, which might be used for a job application, and a working portfolio that typically includes day-to-day work samples. Working portfolios evolve over time and are not intended to be used for assessment of learning. The focus in a working portfolio is on developing ideas and skills, so students should be allowed to make mistakes, freely comment on their own work, and respond to teacher feedback (Linn & Miller, 2005). Finished portfolios are designed for use with a particular audience, and the products selected may be drawn from a working portfolio. For example, in a teacher education program, the working portfolio may contain work samples from all the courses taken. A student may develop one finished portfolio to demonstrate she has mastered the required competencies in the teacher education program and a second finished portfolio for her job application.

Advantages and disadvantages

Portfolios used well in classrooms have several advantages. They provide a way of documenting and evaluating growth in a much more nuanced way than selected response tests can. Also, portfolios can be integrated easily into instruction, that is, used for assessment for learning. Portfolios also encourage student self-evaluation and reflection, as well as ownership of learning (Popham, 2005). Using classroom assessment to promote student motivation is an important component of assessment for learning, and it is considered in the next section.

However, there are some major disadvantages of portfolio use. First, good portfolio assessment takes an enormous amount of teacher time and organization. Time is needed to help students understand the purpose and structure of the portfolio, decide which work samples to collect, and reflect on their own work. Some of this time needs to be spent in one-to-one conferences. Reviewing and evaluating the portfolios outside class time is also enormously time consuming. Teachers have to weigh whether the time spent is worth the benefits of portfolio use.

Second, evaluating portfolios reliably and eliminating bias can be even more difficult than in a constructed response assessment because the products are more varied. The experience of the state-wide use of portfolios for assessment in writing and mathematics for fourth and eighth graders in Vermont is sobering. Teachers used the same analytic scoring rubric when evaluating the portfolios. In the first two years of implementation, samples from schools were collected and scored by an external panel of teachers. In the first year, the agreement among raters (i.e., inter-rater reliability) was poor for both mathematics and writing; in the second year, the agreement among raters improved for mathematics but not for writing. However, even with the improvement in mathematics, the reliability was too low to use the portfolios for individual student accountability (Koretz, Stecher, Klein, & McCaffrey, 1994). When reliability is low, validity is also compromised because unstable results cannot be interpreted meaningfully.

If teachers do use portfolios in their classrooms, the series of steps needed for implementation is outlined in Table 42. If the school or district has an existing portfolio system, these steps may have to be modified.

Table 42: Steps in implementing a classroom portfolio program

| Step | What to do |
|---|---|
| 1. Make sure students own their portfolios. | Talk to your students about your ideas for the portfolio, its different purposes, and the variety of work samples. If possible, have them help make decisions about the kind of portfolio you implement. |
| 2. Decide on the purpose. | Will the focus be on growth or current accomplishments? Best work showcase or documentation? Good portfolios can have multiple purposes, but the teacher and students need to be clear about the purpose. |
| 3. Decide what work samples to collect. | For example, in writing, is every writing assignment included? Are early drafts as well as final products included? |
| 4. Collect and store work samples. | Decide where the work samples will be stored. For example, will each student have a file folder in a file cabinet, or a small plastic tub on a shelf in the classroom? |
| 5. Select criteria to evaluate samples. | If possible, work with students to develop scoring rubrics. This may take considerable time, as different rubrics may be needed for the variety of work samples. If you are using existing scoring rubrics, discuss possible modifications with students after the rubrics have been used at least once. |
| 6. Teach students to conduct self-evaluations of their own work, and require them to do so. | Help students learn to evaluate their own work using agreed-upon criteria. For younger students, the self-evaluations may be simple (strengths, weaknesses, and ways to improve); for older students, a more analytic approach is desirable, including using the same scoring rubrics that the teacher will use. |
| 7. Schedule and conduct portfolio conferences. | Teacher-student conferences are time consuming, but they are essential if the portfolio process is to significantly enhance learning. These conferences should aid students’ self-evaluation and should take place frequently. |
| 8. Involve parents. | Parents need to understand the portfolio process. Encourage parents to review the work samples. You may wish to schedule parent-teacher-student conferences in which students talk about their work samples. |

Source: Adapted from Popham (2005)

Assessment that enhances motivation and student confidence

Studies on testing and learning conducted more than 20 years ago demonstrated that tests promote learning and that more frequent tests are more effective than less frequent tests (Dempster & Perkins, 1993). Frequent smaller tests encourage continuous effort rather than last-minute cramming and may also reduce test anxiety because the consequences of errors are reduced. College students report preferring frequent testing to infrequent testing (Bangert-Drowns, Kulik, & Kulik, 1991). More recent research indicates that teachers’ assessment purposes and beliefs, the type of assessment selected, and the feedback given all contribute to the assessment climate in the classroom, which in turn influences students’ confidence and motivation. The use of self-assessment is also important in establishing a positive assessment climate.

Teachers’ purposes and beliefs