29 SPECTRAL THEOREM

29.1. Eigenvalues and eigenvectors of symmetric matrices

Let $A$ be a square, $n \times n$ symmetric matrix. A real scalar $\lambda$ is said to be an eigenvalue of $A$ if there exists a non-zero vector $u \in \mathbb{R}^n$ such that

$$
Au = \lambda u.
$$

The vector $u$ is then referred to as an eigenvector associated with the eigenvalue $\lambda$. The eigenvector $u$ is said to be normalized if $\|u\|_2 = 1$. In this case, we have

$$
u^T A u = \lambda u^T u = \lambda.
$$
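As a quick numerical check of the definition, the sketch below verifies an eigenpair for a small matrix. The matrix `A`, the vector `u`, and the helper names `matvec` and `dot` are illustrative assumptions, not part of the text.

```python
import math

# Hypothetical 2 x 2 symmetric matrix, stored as nested lists.
A = [[2.0, 1.0],
     [1.0, 2.0]]

# Candidate eigenvector, normalized so that its Euclidean norm is 1.
u = [1.0 / math.sqrt(2.0), 1.0 / math.sqrt(2.0)]
lam = 3.0  # candidate eigenvalue


def matvec(M, x):
    """Return the matrix-vector product M x."""
    return [sum(m_ij * x_j for m_ij, x_j in zip(row, x)) for row in M]


def dot(x, y):
    """Return the inner product x^T y."""
    return sum(x_i * y_i for x_i, y_i in zip(x, y))


Au = matvec(A, u)

# A u should equal lam * u, entry by entry (up to rounding).
is_eigenpair = all(math.isclose(a, lam * u_i) for a, u_i in zip(Au, u))

# For a normalized eigenvector, u^T A u recovers the eigenvalue.
quadratic_form = dot(u, Au)
```

Here `is_eigenpair` confirms that $Au = \lambda u$ holds numerically, and `quadratic_form` should agree with `lam` up to rounding.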

The interpretation of $u$ is that it defines a direction along which $A$ behaves just like scalar multiplication. The amount of scaling is given by $\lambda$. (In German, the root ‘‘eigen’’ means ‘‘self’’ or ‘‘proper’’.) The eigenvalues of the matrix $A$ are characterized by the characteristic equation

$$
\det(\lambda I - A) = 0,
$$

where the notation $\det$ refers to the determinant of its matrix argument. The function $p$ defined by $p(t) := \det(tI - A)$ is a polynomial of degree $n$ called the characteristic polynomial.

From the fundamental theorem of algebra, any polynomial of degree $n$ has $n$ (possibly not distinct) complex roots. For symmetric matrices, the eigenvalues are real, since $\lambda = u^T A u$ when $Au = \lambda u$, and $u$ is normalized.
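To make this concrete, here is a worked $2 \times 2$ case. The entries $a$, $b$, $c$ are illustrative symbols, not notation from the text. For a symmetric matrix with diagonal entries $a$, $c$ and off-diagonal entry $b$, the characteristic polynomial is

$$
p(t) = \det \begin{pmatrix} t - a & -b \\ -b & t - c \end{pmatrix} = (t-a)(t-c) - b^2 = t^2 - (a+c)\,t + (ac - b^2).
$$

Its discriminant is

$$
(a+c)^2 - 4(ac - b^2) = (a-c)^2 + 4b^2 \ge 0,
$$

so both roots are real:

$$
\lambda = \frac{a+c}{2} \pm \frac{1}{2}\sqrt{(a-c)^2 + 4b^2}.
$$

For instance, $a = c = 2$ and $b = 1$ give $p(t) = t^2 - 4t + 3 = (t-1)(t-3)$, with eigenvalues $1$ and $3$.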

29.2. Spectral theorem

An important result of linear algebra called the spectral theorem, or symmetric eigenvalue decomposition (SED) theorem, states that for any symmetric matrix, there are exactly $n$ (possibly not distinct) eigenvalues, and they are all real; further, that the associated eigenvectors can be chosen so as to form an orthonormal basis. The result offers a simple way to decompose the symmetric matrix as a product of simple transformations.

Theorem: Symmetric eigenvalue decomposition

|

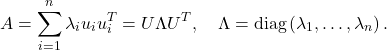

We can decompose any symmetric matrix where the matrix of |

Here is a proof. The SED provides a decomposition of the matrix in simple terms, namely dyads.

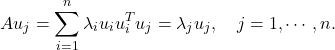
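The dyad form can be illustrated with a minimal sketch that rebuilds a matrix from eigenvalue-weighted dyads $\lambda_i u_i u_i^T$. The eigenpairs below are those of the hypothetical matrix with rows $(2, 1)$ and $(1, 2)$, and the helper name `dyad` is an assumption made for illustration.

```python
import math

s = 1.0 / math.sqrt(2.0)

# Eigenvalues and orthonormal eigenvectors of the hypothetical matrix
# [[2, 1], [1, 2]], entered by hand for illustration.
eigenvalues = [3.0, 1.0]
eigenvectors = [[s, s], [s, -s]]  # u_1 and u_2


def dyad(u):
    """Return the rank-one matrix u u^T as nested lists."""
    return [[u_i * u_j for u_j in u] for u_i in u]


n = len(eigenvalues)
A_rebuilt = [[0.0] * n for _ in range(n)]

# Accumulate the sum of the dyads lambda_i * u_i u_i^T.
for lam, u in zip(eigenvalues, eigenvectors):
    D = dyad(u)
    for i in range(n):
        for j in range(n):
            A_rebuilt[i][j] += lam * D[i][j]
```

Up to rounding, `A_rebuilt` should match the matrix with rows $(2, 1)$ and $(1, 2)$: each dyad projects onto one eigenvector direction, and the eigenvalue sets the scaling along it.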

We check that in the SED above, the scalars $\lambda_i$ are the eigenvalues, and the $u_i$'s are associated eigenvectors, since, by orthonormality of the $u_i$'s,

$$
A u_j = \sum_{i=1}^n \lambda_i u_i (u_i^T u_j) = \lambda_j u_j, \quad j = 1, \ldots, n.
$$

The eigenvalue decomposition of a symmetric matrix can be efficiently computed with standard software, in time that grows with its dimension $n$ as $n^3$.
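To give a feel for how such a computation can proceed, here is a minimal sketch of the classical cyclic Jacobi rotation method in plain Python. This is a swapped-in textbook technique chosen for brevity, not necessarily what standard libraries use. The function name `jacobi_eigh`, its parameters, and the test matrix are assumptions made for illustration.

```python
import math


def jacobi_eigh(A, tol=1e-12, max_sweeps=50):
    """Cyclic Jacobi sketch for a symmetric matrix given as a list of lists.

    Returns (eigenvalues, U), where column i of U is a unit eigenvector
    for eigenvalues[i], so that A is approximately U diag(eigenvalues) U^T.
    """
    n = len(A)
    S = [list(row) for row in A]  # working copy, driven toward diagonal form
    U = [[float(i == j) for j in range(n)] for i in range(n)]  # accumulated rotations

    for _ in range(max_sweeps):
        # Stop once the off-diagonal part is negligible.
        off = math.sqrt(sum(S[i][j] ** 2
                            for i in range(n) for j in range(n) if i != j))
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if S[p][q] == 0.0:
                    continue
                # Rotation angle in [-pi/4, pi/4] that zeroes S[p][q].
                d = S[q][q] - S[p][p]
                if d >= 0.0:
                    theta = 0.5 * math.atan2(2.0 * S[p][q], d)
                else:
                    theta = 0.5 * math.atan2(-2.0 * S[p][q], -d)
                c, s = math.cos(theta), math.sin(theta)
                # Column update: S <- S J and U <- U J.
                for M in (S, U):
                    for k in range(n):
                        mkp, mkq = M[k][p], M[k][q]
                        M[k][p] = c * mkp - s * mkq
                        M[k][q] = s * mkp + c * mkq
                # Row update: S <- J^T S.
                for k in range(n):
                    spk, sqk = S[p][k], S[q][k]
                    S[p][k] = c * spk - s * sqk
                    S[q][k] = s * spk + c * sqk

    return [S[i][i] for i in range(n)], U


# Hypothetical 3 x 3 symmetric test matrix.
A = [[4.0, 1.0, 2.0],
     [1.0, 3.0, 0.0],
     [2.0, 0.0, 1.0]]
eigenvalues, U = jacobi_eigh(A)
```

Each sweep visits on the order of $n^2$ off-diagonal entries and spends on the order of $n$ operations per rotation, so one sweep costs on the order of $n^3$ operations. The eigenvalues are returned in no particular order.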

Example: Eigenvalue decomposition of a ![]() symmetric matrix.


29.3. Rayleigh quotients

Given a symmetric matrix $A$, we can express the smallest and largest eigenvalues of $A$, denoted $\lambda_{\min}$ and $\lambda_{\max}$ respectively, in the so-called variational form

$$
\lambda_{\min}(A) = \min_x \left\{ x^T A x : x^T x = 1 \right\}, \qquad \lambda_{\max}(A) = \max_x \left\{ x^T A x : x^T x = 1 \right\}.
$$

For proof, see here.

The term ‘‘variational’’ refers to the fact that the eigenvalues are given as optimal values of optimization problems, which were referred to in the past as variational problems. Variational representations exist for all the eigenvalues but are more complicated to state.

The interpretation of the above identities is that the largest and smallest eigenvalues are a measure of the range of the quadratic function $x \mapsto x^T A x$ over the unit Euclidean sphere. The quantities above can be written as the minimum and maximum, over non-zero vectors $x$, of the so-called Rayleigh quotient $\frac{x^T A x}{x^T x}$.
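The bounds can be checked empirically with a small sketch that samples random directions and evaluates the Rayleigh quotient. The matrix `A`, its hand-entered eigenvalues, the sample size, and the function name `rayleigh_quotient` are illustrative assumptions.

```python
import random


def rayleigh_quotient(A, x):
    """Return (x^T A x) / (x^T x) for a non-zero vector x."""
    Ax = [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in A]
    xAx = sum(x_i * ax_i for x_i, ax_i in zip(x, Ax))
    return xAx / sum(x_i * x_i for x_i in x)


# Hypothetical symmetric matrix with eigenvalues 1 and 3.
A = [[2.0, 1.0],
     [1.0, 2.0]]
lam_min, lam_max = 1.0, 3.0

rng = random.Random(0)  # fixed seed so the run is reproducible
samples = []
for _ in range(1000):
    x = [rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)]
    if any(x):  # guard against the zero vector
        samples.append(rayleigh_quotient(A, x))

lowest, highest = min(samples), max(samples)

# The extremes are attained along the eigenvectors (1, -1) and (1, 1).
at_u_min = rayleigh_quotient(A, [1.0, -1.0])
at_u_max = rayleigh_quotient(A, [1.0, 1.0])
```

Every sampled value should fall between `lam_min` and `lam_max` up to rounding, while `at_u_min` and `at_u_max` hit the two ends of the interval. Because the quotient is unchanged when $x$ is rescaled, the vectors need not be normalized.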

Historically, David Hilbert coined the term ‘‘spectrum’’ for the set of eigenvalues of a symmetric operator (roughly, a matrix of infinite dimensions). The fact that for symmetric matrices, every eigenvalue lies in the interval $[\lambda_{\min}, \lambda_{\max}]$ somewhat justifies the terminology.